PUBLICATION

Munk, A. K., Olesen, A. G., & Jacomy, M. (2022). The thick machine: Anthropological AI between explanation and explication. Big Data & Society, 9(1).

Full text available open access.

BibTeX

@article{munk2022thick, title={The thick machine: Anthropological AI between explanation and explication}, author={Munk, Anders Kristian and Olesen, Asger Gehrt and Jacomy, Mathieu}, journal={Big Data \& Society}, volume={9}, number={1}, pages={20539517211069891}, year={2022}, publisher={SAGE Publications Sage UK: London, England}}

I wrote this paper together with Asger Gehrt Olesen and Mathieu Jacomy for a conference on Machine Anthropology organized by Morten Axel Pedersen at the University of Copenhagen in 2020. The papers from the conference later became part of a special issue in Big Data & Society, also edited by Morten.

The paper explores the possibility of repurposing supervised machine learning for so-called thick description, i.e. the interpretation of cultural meaning in context.

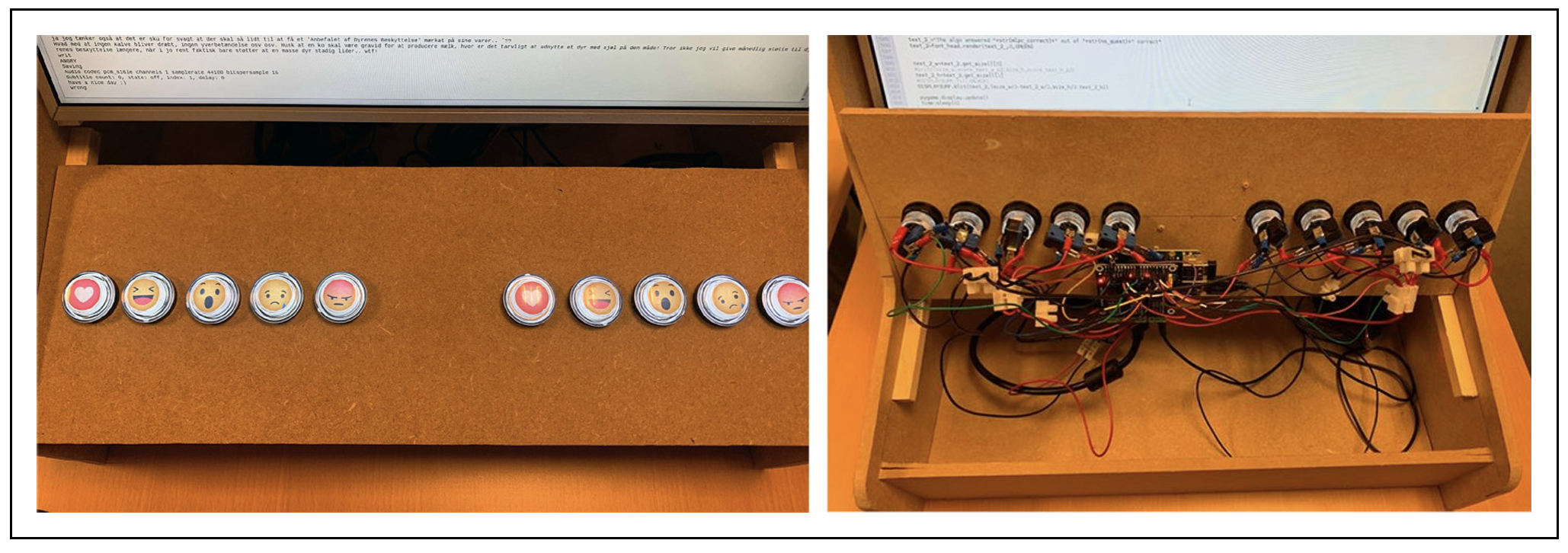

We go back to the debate between Clifford Geertz (who made thick description famous) and the ethnoscientists of his day, and devise a game (physical, arcade-style; see below) in which humans compete with a machine learning classifier to predict emoji reactions in online political discourse.

The game draws on a dataset of public Danish Facebook pages (curated by Asger and me before the 2018 API changes). Players are presented with a post and a comment from Facebook. They then have to guess which emoji reaction the commenter gave to the post.

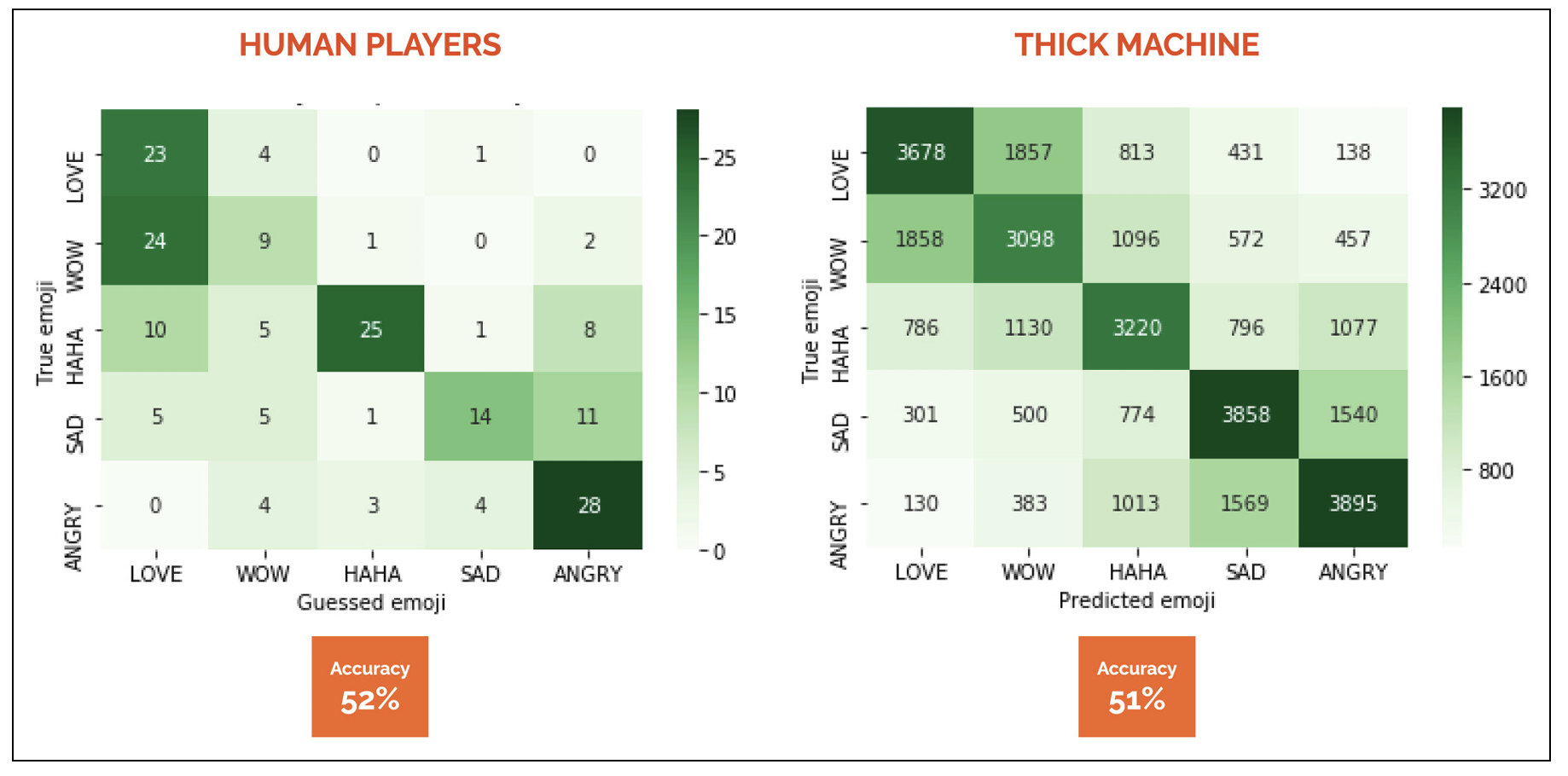

As it turns out, neither the human players nor the trained machine learning classifier is very good at it. Indeed, they are wrong in the same situations, and when we investigate those situations they turn out to be deeper, with conflicting layers of meaning.

This leads us to speculate that machine learning mispredictions can be repurposed as a way to identify situations where thick description is required. Jill Rettberg has since reproduced this approach on different datasets and argues that it generalizes as a method for the humanities. She even made a nice video explainer about it.
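To make the idea concrete, here is a minimal Python sketch of how shared mispredictions might be flagged. It is not the pipeline from the paper: the `Item` structure, its field names, the majority-vote rule for the human players, and the three toy records are all assumptions made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Item:
    """One post/comment pair from the game (hypothetical structure)."""
    item_id: str
    true_reaction: str        # reaction the commenter actually gave
    machine_guess: str        # reaction predicted by the classifier
    human_guesses: List[str]  # reactions guessed by the players


def majority(guesses: List[str]) -> Optional[str]:
    """Return the most common guess, or None if nobody guessed."""
    if not guesses:
        return None
    return Counter(guesses).most_common(1)[0][0]


def thick_candidates(items: List[Item]) -> List[str]:
    """Return ids of items that both the machine and the human majority got wrong."""
    flagged = []
    for item in items:
        machine_wrong = item.machine_guess != item.true_reaction
        human_majority = majority(item.human_guesses)
        humans_wrong = (
            human_majority is not None
            and human_majority != item.true_reaction
        )
        if machine_wrong and humans_wrong:
            flagged.append(item.item_id)
    return flagged


# Invented toy records, for illustration only (not from the paper's dataset).
items = [
    Item("a", "angry", "angry", ["angry", "angry", "haha"]),
    Item("b", "haha", "angry", ["angry", "angry", "haha"]),
    Item("c", "love", "like", ["love", "love", "like"]),
]
candidates = thick_candidates(items)
print(candidates)
```

In this toy example only item `b` is flagged, since it is the one case where the classifier and most players miss the actual reaction. In practice the flagged items would be a reading list for closer interpretation, not a result in themselves.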

Ultimately, we take the experiment as an impetus to discuss how we frame the potential use of computational methods in anthropology. In particular, we are critical of the way computation is often all too readily associated with formalist ambitions.

p.2

This antagonism between interpretative and explanatory ambitions is still at play in anthropological engagements with computation today, where it locates the use of algorithms solidly, but erroneously, with the latter and as antithetical to the former.

p.3

Both machine learners and interpretative anthropologists are in the business of attuning to native ways of ascribing meaning to a situation; both do so without a clear theory of how that happens, in the sense that machine learning is increasingly becoming unexplainable and interpretative anthropologists have always relied on a somewhat underspecified moment of clarity (also known as the “Geertzian moment”), where the field begins to make sense; both rely on immersion to accomplish their task. Neither machine learners nor interpretative anthropologists produce a set of cultural rules that allow them or others to explain anything; neither are capable of explaining their own process in precise terms. Contrary to interpretative anthropologists, machine learners are not born with an ambition to explicate anything to an external readership (explication taken here as distinctly different from explanation, namely, as the act of constructing a reading of a situation); however, given the commonalities laid out above, one could imagine the possibility that they could be of assistance in such a process. The standards by which we judge the usefulness of machine learning techniques in anthropological analysis should change quite dramatically if the objective is not, as Geertz put it, “to codify abstract regularities but to make thick description possible, not to generalize across cases but to generalize within them” (1973).

p.13

The situations where the machine fails come into view as situations with a real potential for thick description. When it is hard to train a classifier, it is typically because the situation is ambiguous. As soon as there is a play between multiple and overlapping frames of interpretation ingredient in the situation, both the machine and the players fail. Arguably, these failures could be understood as indications that here are situations worthy of being explicated.